Agentic AI is one of the most discussed concepts in technology right now, but most explanations stop at the surface level. They tell you that agentic AI can “autonomously complete tasks” or “act on your behalf,” without ever explaining the actual mechanics behind that capability.

This article goes deeper. If you want to understand not just what agentic AI does but how it actually works (the components, the processes, the loops, and the logic), this is the guide for you.

Start With the Right Mental Model

Before diving into the technical details, it helps to establish the right mental model.

A traditional AI interaction looks like this: you send a message, the model generates a response, the interaction ends. It is a single turn. The model produces one output and stops.

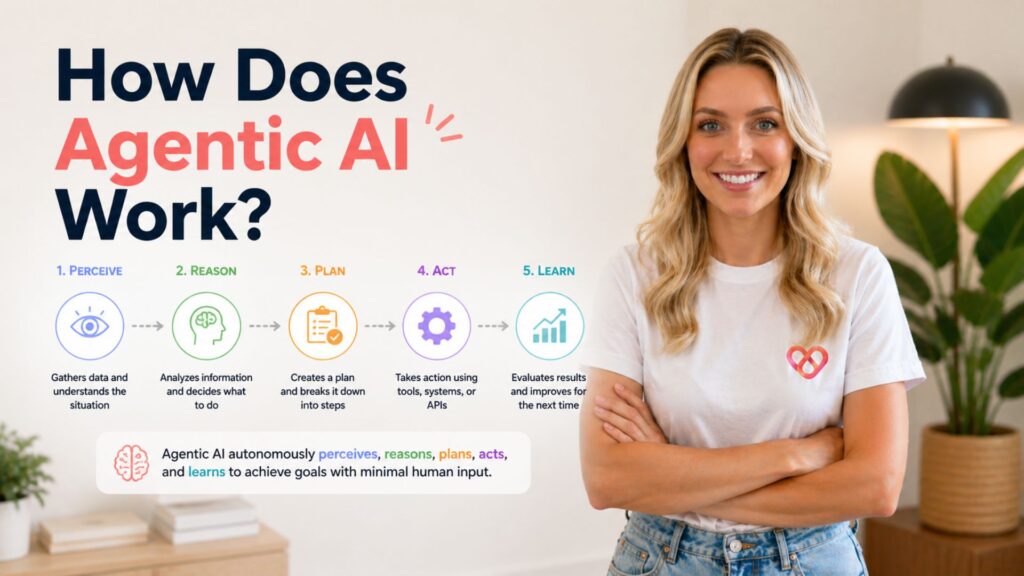

An agentic AI interaction looks fundamentally different. You give the system a goal, something like “research the top five competitors in this market and produce a formatted report.” The system then enters a continuous loop: it plans, it acts, it observes what happened, it decides what to do next, and it keeps going until the goal is achieved or it determines it cannot proceed.

That loop (plan, act, observe, decide) is the heart of how agentic AI works. Everything else is built around enabling and sustaining that loop reliably.

The Core Components

To understand how agentic AI works, you need to understand the building blocks that make it possible. Most agentic systems share the same fundamental architecture, even if the specific implementations differ.

1. The Language Model: The Reasoning Engine

At the center of every modern agentic AI system is a large language model. As covered in our article on “what is agentic AI,” the LLM serves as the brain of the agent: the component responsible for understanding goals, reasoning through problems, generating plans, interpreting results, and deciding what to do next.

The LLM does not execute actions directly. It reasons about what actions should be taken and generates structured outputs, often in the form of function calls or tool invocations, that trigger those actions. Think of it as a highly capable planner and decision-maker sitting at the center of a system that can interact with the world.
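
To make the idea of a structured output concrete, here is a minimal sketch in Python. It assumes the model emits a JSON object with `tool` and `arguments` keys; that schema, the `ToolCall` class, and the `parse_tool_call` helper are all hypothetical, and real providers each define their own function-calling format.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolCall:
    """One action requested by the model (hypothetical schema)."""
    name: str
    arguments: dict


def parse_tool_call(model_output: str) -> Optional[ToolCall]:
    """Return a ToolCall if the output is a tool request, else None."""
    try:
        payload = json.loads(model_output)
    except json.JSONDecodeError:
        return None  # plain prose, nothing to execute
    if not isinstance(payload, dict) or "tool" not in payload:
        return None
    return ToolCall(name=payload["tool"], arguments=payload.get("arguments", {}))


# The model only describes the action; the surrounding runtime carries it out.
raw = '{"tool": "web_search", "arguments": {"query": "top competitors"}}'
print(parse_tool_call(raw))
```

The point of the separation is that the model's output is just data. Nothing happens in the world until the surrounding system decides to act on it.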

2. Tools: The Hands of the Agent

If the LLM is the brain, tools are the hands. Tools are the mechanisms through which an agentic AI system interacts with the world beyond its own internal knowledge.

Common tools in agentic systems include:

- Web search: retrieving current information from the internet

- Code execution: writing and running code in a sandboxed environment

- File reading and writing: accessing and modifying documents, spreadsheets, and data files

- API calls: interacting with external services like calendars, email clients, databases, and third-party platforms

- Browser control: navigating websites and interacting with web interfaces

- Memory retrieval: querying stored information from previous sessions or external knowledge bases

The agent decides which tool to use, constructs the appropriate input, calls the tool, and receives the output, which it then uses to inform its next decision.
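
One common way to wire this up is a registry that maps tool names to functions. The sketch below is illustrative only: `web_search` and `read_file` are stubs, and `TOOLS` and `run_tool` are hypothetical names rather than part of any particular framework.

```python
from typing import Callable, Dict


# Stub tools; real ones would call a search API, the file system, and so on.
def web_search(query: str) -> str:
    return f"(stub) top results for: {query}"


def read_file(path: str) -> str:
    return f"(stub) contents of {path}"


TOOLS: Dict[str, Callable[..., str]] = {
    "web_search": web_search,
    "read_file": read_file,
}


def run_tool(name: str, arguments: dict) -> str:
    """Look up the tool the agent chose, call it, and return its output as text."""
    tool = TOOLS.get(name)
    if tool is None:
        # Reported back as an observation so the agent can pick another tool.
        return f"error: unknown tool '{name}'"
    try:
        return tool(**arguments)
    except TypeError as exc:  # wrong or missing arguments
        return f"error: bad arguments for '{name}': {exc}"


print(run_tool("web_search", {"query": "agentic AI"}))
```

Returning errors as text rather than raising them is a design choice: it lets the agent see the failure as just another output to reason about.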

3. Memory: Continuity Across Steps

One of the defining features of agentic AI is the ability to operate across multiple steps over time. This requires memory: the ability to retain and retrieve relevant information throughout the course of a task.

Agentic systems typically use several types of memory:

In-context memory is the information currently held in the model’s active context window: everything the agent has seen, done, and received during the current session. This is the most immediate form of memory but is limited by the context window size.

External memory extends beyond the context window by storing information in an external database or vector store that the agent can query as needed. This allows agentic systems to access far more information than could fit in a single context window.

Episodic memory stores records of past actions and their outcomes, allowing the agent to learn from previous experience within a longer-running workflow.
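
The three memory types can be sketched together in a few lines of Python. This is a toy model under stated assumptions: the context window is approximated by an item count rather than tokens, and retrieval uses simple word overlap as a stand-in for the vector search a real system would likely use. The `AgentMemory` class and its methods are hypothetical.

```python
from collections import deque
from typing import List, Tuple


class AgentMemory:
    def __init__(self, context_limit: int = 4):
        # In-context: only the most recent items fit (stand-in for a token budget).
        self.in_context = deque(maxlen=context_limit)
        # External: everything ever observed (stand-in for a database or vector store).
        self.external: List[str] = []
        # Episodic: which actions were tried and how they turned out.
        self.episodes: List[Tuple[str, str]] = []

    def observe(self, text: str) -> None:
        self.in_context.append(text)  # the oldest item is evicted when full
        self.external.append(text)    # archived so it can be recalled later

    def record_episode(self, action: str, outcome: str) -> None:
        self.episodes.append((action, outcome))

    def recall(self, query: str, k: int = 2) -> List[str]:
        """Return up to k archived items sharing the most words with the query."""
        words = set(query.lower().split())
        scored = [(len(words & set(t.lower().split())), t) for t in self.external]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]
```

Once the context limit is reached, older observations drop out of `in_context` but remain reachable through `recall`, which is the trade-off the external store exists to make.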

4. The Planning Layer

Agentic AI does not just react to a goal; it reasons about how to achieve it. The planning layer is where the agent breaks a high-level objective down into a structured sequence of sub-tasks.

This process involves several techniques:

Task decomposition: breaking a complex goal into smaller, manageable steps. Instead of trying to accomplish everything at once, the agent identifies the logical sequence of actions required to reach the objective.

Chain-of-thought reasoning: the agent reasons through problems step by step, making its logic explicit before committing to an action. This improves decision quality and makes the agent’s behavior more predictable and auditable.

Reflection and self-critique: after completing a step or reaching an intermediate result, the agent evaluates whether it is on track. If the result is not what was expected, it can revise its plan before proceeding.
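
A rough sketch of decomposition plus reflection might look like the following. In a real agent both the plan and the self-critique would come from the LLM; here `decompose` returns a fixed, hypothetical plan and each step carries a simple acceptance check, so the control flow can be seen on its own. All names are illustrative.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    description: str
    is_acceptable: Callable[[list], bool]  # self-critique rule for this step's result


def decompose(goal: str) -> List[Step]:
    """Stand-in for an LLM planning call; returns a fixed, hypothetical plan."""
    return [
        Step("search for competitors", lambda r: len(r) >= 5),
        Step("write report", lambda r: len(r) > 0),
    ]


def run_plan(goal: str, execute: Callable[[str], list], max_revisions: int = 2) -> List[str]:
    """Work through the plan, revising it when reflection rejects a result."""
    queue = decompose(goal)
    trace: List[str] = []
    revisions = 0
    while queue:
        step = queue.pop(0)
        result = execute(step.description)
        trace.append(step.description)
        if step.is_acceptable(result):
            continue  # on track, move to the next step
        if revisions == max_revisions:
            trace.append("gave up: needs human review")
            break
        revisions += 1
        # Reflection failed: put a corrective step ahead of the remaining plan.
        queue.insert(0, Step("revise approach and retry", step.is_acceptable))
    return trace
```

The cap on revisions matters. Without it, an agent that keeps rejecting its own results would loop indefinitely.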

5. The Feedback Loop

This is perhaps the most important structural feature of agentic AI, and the one that most distinguishes it from non-agentic systems. As explored in our piece on “what does agentic mean,” the concept of agency fundamentally involves perceiving the environment and adapting behavior based on what is observed.

In practice, the feedback loop works like this:

- The agent takes an action: calling a tool, executing code, or retrieving information

- The action produces an output: a search result, a code execution result, or an API response

- The agent observes that output and incorporates it into its context

- The agent reasons about what the output means and what should happen next

- The agent decides on the next action and executes it

- The loop repeats until the goal is achieved or the agent determines it cannot proceed

This continuous perception-action-observation loop is what allows agentic AI to navigate complex, multi-step tasks that no single prompt could accomplish.
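
Stripped of everything else, the loop described above fits in a short Python function. This is a minimal sketch, not a production design: `decide` stands in for the LLM call, `Decision` is a hypothetical structure, and the step limit is an assumed safeguard against loops that never finish.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Decision:
    action: str    # a tool name, or "finish"
    argument: str  # the tool input, or the final answer when action == "finish"


def run_agent(
    goal: str,
    decide: Callable[[List[str]], Decision],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 10,
) -> Tuple[str, List[str]]:
    context = [f"GOAL: {goal}"]
    for _ in range(max_steps):
        decision = decide(context)                      # reason over everything seen so far
        if decision.action == "finish":
            return decision.argument, context
        output = tools[decision.action](decision.argument)  # act
        context.append(f"ACTION: {decision.action}({decision.argument!r})")
        context.append(f"OBSERVATION: {output}")        # observe, then loop again
    return "stopped: step limit reached", context


def scripted_decide(context: List[str]) -> Decision:
    """Stand-in for the LLM: search once, then finish with what was observed."""
    observations = [c for c in context if c.startswith("OBSERVATION: ")]
    if not observations:
        return Decision("search", "agentic AI papers")
    return Decision("finish", "Found: " + observations[-1].split(": ", 1)[1])


tools = {"search": lambda q: f"3 results for '{q}'"}
answer, history = run_agent("find papers", scripted_decide, tools)
print(answer)
```

Notice that `decide` receives the full context on every pass. Each observation changes what the next decision sees, which is what makes this a feedback loop rather than a fixed script.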

The Execution Flow: Step by Step

To make this concrete, here is how an agentic AI system would handle a realistic task: “Find the three most-cited papers on agentic AI from the past year, summarize each one, and save the summaries to a document.”

Step 1: Goal interpretation. The LLM receives the goal and interprets what is being asked. It identifies the key requirements: recency (past year), source type (academic papers), metric (citation count), output (summaries in a document).

Step 2: Planning. The agent decomposes the task: search for recent highly cited papers → evaluate results → select top three → read each paper → write summaries → create and save document.

Step 3: Tool use (web search). The agent calls a web search tool with an appropriate query. It receives a list of results and evaluates them against the criteria.

Step 4: Iteration. The initial search may not return perfectly relevant results. The agent refines its query, runs additional searches, and compares results until it has identified three strong candidates.

Step 5: Content retrieval. The agent fetches the content of each paper through a web fetch tool, a PDF reader, or an academic database API.

Step 6: Summarization. The LLM reads each paper and generates a structured summary, applying consistent formatting across all three.

Step 7: Document creation. The agent calls a file-writing tool to create a document, formats the summaries, and saves the output.

Step 8: Verification. The agent reviews the completed document against the original goal to confirm the task is complete.

Each of these steps involves the core loop: plan, act, observe, decide. The agent is not following a fixed script; it is reasoning at each step about what to do next based on what it has observed.
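
Steps 3 and 4 are the easiest to picture in code. The sketch below assumes a `search` function that returns papers as dictionaries with `title`, `citations`, and `year` keys, and a list of progressively refined queries. In a real agent the LLM would generate each refinement after seeing the previous results; the function name and data shape here are hypothetical.

```python
from typing import Callable, Dict, List


def find_top_papers(
    search: Callable[[str], List[dict]],
    queries: List[str],
    min_year: int,
    wanted: int = 3,
) -> List[dict]:
    """Try successive query refinements until enough qualifying papers are found."""
    seen: Dict[str, dict] = {}
    for query in queries:
        for paper in search(query):
            if paper["year"] >= min_year:     # recency criterion from the goal
                seen[paper["title"]] = paper  # de-duplicate across searches
        if len(seen) >= wanted:
            break                             # enough candidates, stop refining
    # Rank by the metric the goal asked for: citation count.
    ranked = sorted(seen.values(), key=lambda p: p["citations"], reverse=True)
    return ranked[:wanted]
```

If every query is exhausted without reaching `wanted` candidates, the function returns what it has. That is the kind of partial result the verification step would then need to flag.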

How Agentic AI Handles Failure

One of the most important and underappreciated aspects of how agentic AI works is its ability, or inability, to handle failure gracefully.

When a step in an agentic workflow fails, a well-designed agent does not simply stop. It recognizes the failure, reasons about what went wrong, and attempts an alternative approach. This might mean retrying with different parameters, using a different tool, breaking the problem down differently, or escalating to a human when it determines it cannot proceed on its own.

This is also where many current agentic systems struggle. As we discussed in “is agentic AI just hype,” one of the core challenges with agentic AI today is that errors in early steps can compound through subsequent steps, and not all agents handle unexpected situations reliably. The robustness of failure handling is one of the key dimensions that separates mature agentic systems from brittle ones.
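
The recovery strategies described above (retry, switch approach, escalate) can be sketched as a small wrapper. This is one possible arrangement, assuming each approach is a zero-argument callable; `call_with_recovery` and `NeedsHuman` are hypothetical names.

```python
from typing import Callable, List


class NeedsHuman(Exception):
    """Raised when every automated recovery option has been exhausted."""


def call_with_recovery(attempts: List[Callable[[], str]], retries_each: int = 2) -> str:
    """Try each approach in order, retrying a few times before moving on."""
    errors: List[str] = []
    for attempt in attempts:              # primary approach first, then alternatives
        name = getattr(attempt, "__name__", repr(attempt))
        for _ in range(retries_each):
            try:
                return attempt()
            except Exception as exc:      # record the failure and keep going
                errors.append(f"{name}: {exc}")
    # Nothing worked: escalate with the full error history attached.
    raise NeedsHuman("; ".join(errors))
```

Carrying the error history into the escalation is deliberate. A human who picks up the task can see what was already tried instead of starting from nothing.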

Single-Agent vs. Multi-Agent Architectures

Not all agentic AI systems operate as a single agent working through a task alone. Many advanced systems use multi-agent architectures: networks of specialized agents that collaborate to complete complex goals.

In a multi-agent system, different agents may be responsible for different aspects of a task. An orchestrator agent might manage the overall workflow and delegate sub-tasks to specialist agents: one for research, one for writing, one for code execution, one for quality review. Each agent operates within its area of responsibility, passing outputs to the next agent in the pipeline.

This architecture allows for greater specialization, parallelization, and scalability, but also introduces new challenges around coordination, communication, and error propagation between agents.
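
As a rough illustration, an orchestrator can be as simple as a function that hands work from one specialist to the next. The sketch below is sequential only and treats each agent as a plain function from text to text; the `researcher`, `writer`, and `reviewer` stubs are hypothetical, and a real system would put an LLM-backed agent behind each one and might run some of them in parallel.

```python
from typing import Callable, List, Tuple

Agent = Callable[[str], str]


# Stub specialists; each would wrap its own model, prompt, and tools in practice.
def researcher(task: str) -> str:
    return f"notes on {task}"


def writer(notes: str) -> str:
    return f"draft based on [{notes}]"


def reviewer(draft: str) -> str:
    return f"approved: {draft}"


def orchestrate(goal: str, pipeline: List[Tuple[str, Agent]]) -> Tuple[str, List[Tuple[str, str]]]:
    """Pass the work through each specialist in order, logging every hand-off."""
    log: List[Tuple[str, str]] = []
    payload = goal
    for role, agent in pipeline:
        payload = agent(payload)
        log.append((role, payload))  # the trail used to trace errors between agents
    return payload, log


result, handoffs = orchestrate(
    "competitor pricing",
    [("research", researcher), ("writing", writer), ("review", reviewer)],
)
print(result)
```

The hand-off log hints at the error propagation problem: a weak result from the first agent flows into every later one, so being able to see each intermediate output is what makes it traceable.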

The Role of Protocols and Standards

One development that is significantly shaping how agentic AI works in practice is the emergence of standardized protocols for agent-tool communication.

The Model Context Protocol (MCP), developed by Anthropic, is one of the most important of these. MCP provides a standardized interface through which AI agents can connect to tools, data sources, and external services, similar to how USB standardized how devices connect to computers. Rather than building custom integrations for every tool an agent might need, developers can build MCP-compatible tools that any MCP-compatible agent can use.

This standardization is accelerating the development of agentic AI by making it dramatically easier to extend what agents can do and ensuring that tools built by different developers can work together reliably.
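
To give a feel for what standardized means here, MCP messages are framed as JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below builds such a request and reads a text result by hand, without an SDK. The tool name and arguments are hypothetical, the helper functions are illustrative, and the official specification remains the authority on the exact message shapes.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def make_tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def read_tool_result(response_json: str) -> str:
    """Extract the text content from a tools/call response, raising on errors."""
    response = json.loads(response_json)
    if "error" in response:  # protocol-level failure
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    text = "\n".join(
        part["text"] for part in result.get("content", []) if part.get("type") == "text"
    )
    if result.get("isError"):  # the tool itself reported a failure
        raise RuntimeError(text)
    return text


# "get_weather" and its arguments are made up for illustration.
print(make_tool_call_request("get_weather", {"city": "Paris"}))
```

Because every tool is called through the same envelope, an agent that can produce this request can in principle use any compatible tool without tool-specific glue code.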

Why Understanding the Mechanics Matters

Knowing how agentic AI works is not just an intellectual exercise. It has practical implications for anyone building with, deploying, or evaluating agentic systems.

Understanding the feedback loop explains why agentic tasks take longer and cost more than single-turn interactions, and why that cost is often justified by the quality of the output. The planning layer explains why well-specified goals produce dramatically better results than vague ones. The failure handling explains why human oversight remains important even in highly automated agentic workflows.
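
The cost point is easy to check with back-of-the-envelope arithmetic. The sketch below assumes the simplest possible setup, in which the full context is re-sent to the model on every step and nothing is cached or summarized. The token figures are hypothetical and chosen only to show the shape of the growth.

```python
def total_input_tokens(prompt_tokens: int, tokens_per_step: int, steps: int) -> int:
    """Input tokens summed over all model calls when the full context is re-sent each step.

    Call number k (counting from 0) reads the original prompt plus the
    actions and outputs accumulated over the k steps before it.
    """
    return sum(prompt_tokens + k * tokens_per_step for k in range(steps))


single_turn = total_input_tokens(prompt_tokens=1000, tokens_per_step=500, steps=1)
ten_steps = total_input_tokens(prompt_tokens=1000, tokens_per_step=500, steps=10)
print(single_turn, ten_steps)
```

With these made-up figures, a ten-step task reads 32,500 input tokens against 1,000 for a single turn. Because each step re-reads everything before it, the total grows faster than the step count alone would suggest.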

As explored in “is agentic AI the same as generative AI,” the agentic layer adds genuine complexity on top of generative capabilities. That complexity has a logic to it that becomes clear once you understand the underlying architecture.

Agentic AI works because it combines a powerful reasoning engine with the ability to act, observe, and adapt, in a continuous loop, across as many steps as a task requires. That is the mechanism. Everything else is implementation detail.